As a small Chinese company releases a new AI chatbot, stock market values have fallen and grandiose claims have been made. What sets it apart?

China’s latest DeepSeek AI chatbot app is proving to be a technology disrupter. Within just 36 hours it became the most downloaded free iOS application, overtaking OpenAI’s ChatGPT, which previously held the title. Meanwhile, US chip company Nvidia lost nearly $600bn (£483bn) in market value in a single day, a record for the US stock market.

What accounts for this upheaval? The company’s researchers have developed a large language model (LLM) whose reasoning capabilities reportedly rival those of US counterparts such as OpenAI’s, at a small fraction of the cost to train and run.

Andrew Duncan is the Science and Innovation Director for Fundamental AI at the Alan Turing Institute in London.

DeepSeek claims to have done so by employing several technical methods that substantially reduced both the computation time needed to train its model, named R1, and the memory needed to store it.

DeepSeek claims that these reductions have resulted in substantial cost savings. R1’s base model, V3, reportedly took 2.788 million GPU hours to train, running on many graphics processing units (GPUs) concurrently, at a claimed cost of under $6 million (£4.8 million).

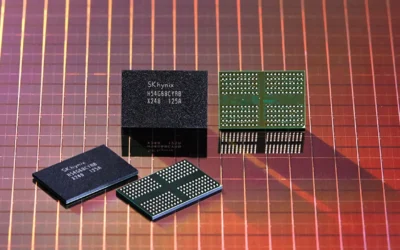

By contrast, OpenAI CEO Sam Altman says that training GPT-4 cost in excess of $100m (£80m). And despite the hit to Nvidia’s market value, the DeepSeek models were trained on around 2,000 Nvidia H800 GPUs, according to the company itself.
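The figures quoted above can be combined into a rough back-of-the-envelope check. The sketch below is only illustrative: it takes the claimed numbers at face value, treats "under $6 million" as a ceiling, and assumes all 2,000 GPUs ran concurrently for the whole training run, which the article does not state. The variable names are chosen for this example.

```python
# Figures as reported in the article (company claims, not independently verified).
GPU_HOURS = 2_788_000         # total GPU hours claimed for training V3
COST_CEILING_USD = 6_000_000  # "under $6 million", treated here as an upper bound
GPU_COUNT = 2_000             # "around 2,000" Nvidia H800 GPUs
GPT4_COST_USD = 100_000_000   # "in excess of $100m", treated here as a lower bound

# Upper bound on the implied price of one GPU running for one hour.
cost_per_gpu_hour = COST_CEILING_USD / GPU_HOURS

# Wall-clock training time, ASSUMING every GPU ran in parallel the whole time.
wall_clock_days = GPU_HOURS / GPU_COUNT / 24

# Lower bound on how many times more GPT-4's training reportedly cost.
cost_ratio = GPT4_COST_USD / COST_CEILING_USD

print(f"Implied price ceiling: ${cost_per_gpu_hour:.2f} per GPU hour")
print(f"Wall-clock training time: about {wall_clock_days:.0f} days")
print(f"GPT-4 cost at least {cost_ratio:.1f} times as much to train")
```

Under those assumptions, the claims work out to roughly $2.15 per GPU hour, about 58 days of training, and a cost gap of at least 16 to 1.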

These chips are a modified version of the widely used H100 chip, built to comply with export restrictions to China. They were likely stockpiled before the Biden administration tightened its restrictions in October 2023, effectively banning Nvidia from shipping H800s to China.

Working within these constraints, DeepSeek was likely forced to find novel ways to get the most out of its limited resources. Reducing the energy costs of training and running models could also go a long way towards alleviating concerns over the environmental impact of AI.

The data centres that AI models run in are highly energy and water intensive, mostly because the servers must be kept cool to stop them overheating. Tech companies rarely disclose the carbon footprint of operating their models, but if one recent estimate is even close to accurate, ChatGPT emits more than 260 tons of carbon dioxide a month, as much as 260 flights from London to New York. Improving the efficiency of AI models would therefore be an important step forward for the industry in environmental terms.

Then, of course, there’s the question of whether DeepSeek’s model will actually result in energy savings, or whether cheaper, more efficient AI will simply incentivize more people to use it, ballooning the amount of energy used. At the very least, it may help to push sustainable AI further up the agenda at the Paris AI Action Summit, so that the AI tools we use in the future are smarter and cleaner, and kinder to the planet.

DeepSeek surprised many by appearing so suddenly with such a strong large language model. Founded only in 2023, the company is now celebrated in China as an “AI hero,” a term that echoes the country’s slogans about its fast-growing technology companies.

The model is constructed from a group of much smaller models, each specializing in a different domain.

The most recent version of DeepSeek is unusual in that its creators released the “weights” (the numeric parameters that result from training) along with a technical paper describing how the system was developed. This transparency allows other groups to run the model independently on their own systems and adapt it for other uses.

What’s more, it allows researchers around the world to study how the model makes its decisions, unlike OpenAI’s o1 and o3 models, which are effectively black boxes. Some pieces are still missing, though, in particular the datasets and code used to train the models, and groups of researchers are now trying to fill in these gaps.

Not all of DeepSeek’s efficiency tricks are new; some of them have already been tried in other LLMs. In 2023, Mistral AI openly released its Mixtral 8x7B model, with performance similar to the state-of-the-art models of the time. Both Mixtral and DeepSeek’s models are based on a “mixture of experts” design, in which the overall model is built as a collection of smaller models, each specialized in a different domain.

Given an input, the mixture model works out which “expert” is best placed to answer it.

DeepSeek has also disclosed its unsuccessful attempts to improve LLM reasoning through other technical approaches, such as Monte Carlo Tree Search (MCTS), a method long touted as a potential way to guide the reasoning of LLMs. Researchers will use this information to work out how the model’s already impressive problem-solving capabilities can be enhanced still further, improvements that are likely to make their way into the next generation of AI models.
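To make the "mixture of experts" idea concrete, here is a minimal sketch of how an input might be routed to experts. It is a toy illustration, not DeepSeek's or Mistral's actual method: the experts, the gate vectors and the `route` function are all hypothetical, and top-k gating is just one common routing scheme, which the article does not confirm for either model.

```python
import math

def softmax(scores):
    """Turn raw gate scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route(x, gate_weights, experts, top_k=2):
    """Send input x to the top_k highest-scoring experts and blend their outputs."""
    # One gate score per expert: dot product of the input with that expert's gate vector.
    scores = [sum(w * xi for w, xi in zip(row, x)) for row in gate_weights]
    # Indices of the top_k experts, best first.
    chosen = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:top_k]
    # Renormalise over the chosen experts only; the others are never evaluated.
    weights = softmax([scores[i] for i in chosen])
    output = sum(w * experts[i](x) for w, i in zip(weights, chosen))
    return chosen, output

# Hypothetical toy experts: in a real model each would be a small neural network.
experts = [
    lambda x: sum(x),       # expert 0
    lambda x: max(x),       # expert 1
    lambda x: min(x),       # expert 2
    lambda x: x[0] - x[1],  # expert 3
]

# Hypothetical gate vectors, one row per expert (learned during training in a real model).
gate_weights = [
    [1.0, 0.0],
    [0.0, 1.0],
    [-1.0, 0.0],
    [0.5, 0.5],
]

chosen, output = route([2.0, 1.0], gate_weights, experts, top_k=2)
print("experts used:", chosen)
print("blended output:", round(output, 4))
```

The point of the design is that only the chosen experts do any work for a given input, so the cost of answering depends on `top_k` rather than on the total number of experts.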

What does all this mean for the AI industry going forward? DeepSeek seems to be demonstrating that you don’t need vast resources to build sophisticated AI models. I think we are going to see increasingly powerful AI models trained on ever smaller budgets as companies hit on ways to make the process of training and running models more efficient.

So far, the AI world has been dominated by “Big Tech” firms in the US, with Donald Trump calling the rise of DeepSeek a “wake-up call” for the US tech industry. However, that trend isn’t necessarily bad news in the long term for firms such as Nvidia: as the financial and time cost of developing AI products falls, both businesses and governments are more likely to adopt the technology.

That will in turn spur demand for new products, as well as the chips that power them, and so the cycle continues. Chances are, we’ll see smaller companies much like DeepSeek playing a growing part in creating AI tools that could make our lives better. It would be a mistake to underestimate that potential.