NVIDIA’s Blackwell GPUs continue to dominate the AI inference race, pairing the industry’s highest inference performance with its highest profit margins. New data from Morgan Stanley Research shows that companies deploying NVIDIA Blackwell GPUs are achieving profit margins as high as 78%, ahead of competitors such as AMD, Google, and Huawei.

NVIDIA’s Blackwell-based GB200 NVL72 platform delivers a profit margin of 77.6%, or approximately $3.5 billion in profit. This result comes from combining NVIDIA’s high-performance hardware with its CUDA software stack, which has been tuned over time for efficiency. FP4 support gives Blackwell better performance per watt, and ongoing software updates bring incremental quarterly uplifts, the same “fine wine” treatment already seen on existing architectures such as Hopper.

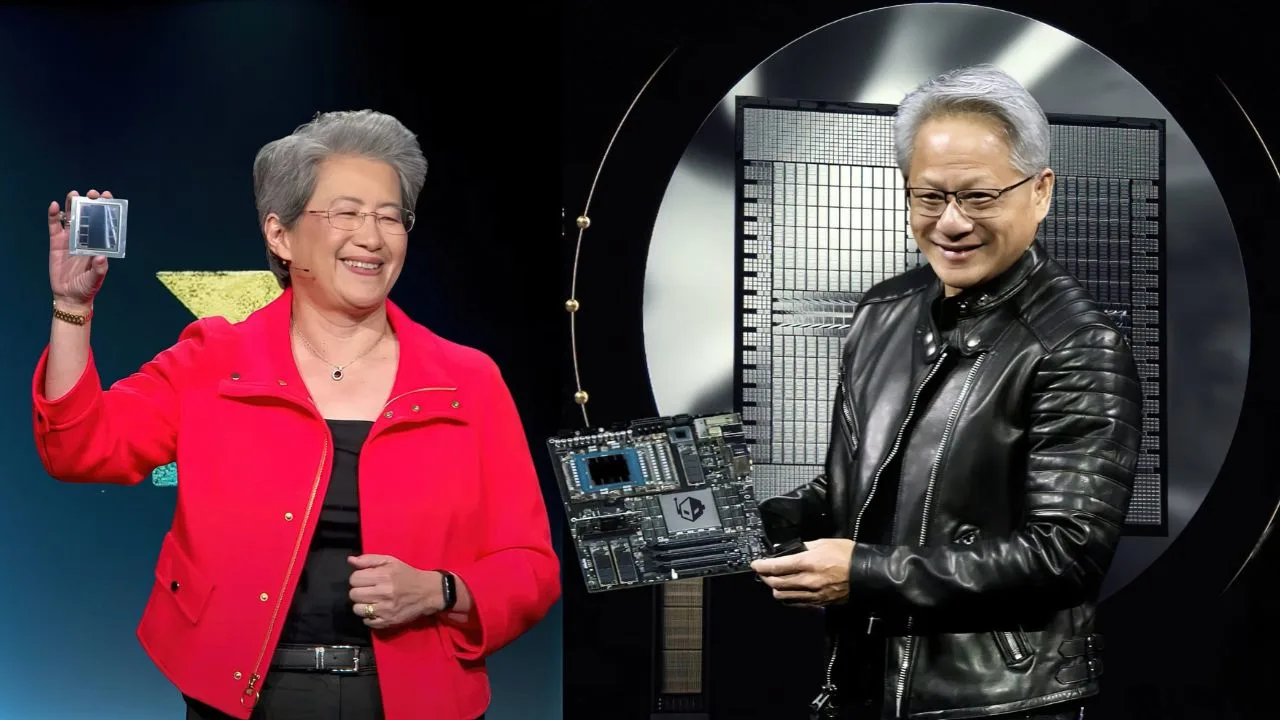

By contrast, Google’s TPU v6e pod has a 74.9% margin, and the AWS Trn2 UltraServer comes next with 62.5%. AMD, on the other hand, fares poorly in the AI inference market. The newer MI355X platform shows a profit margin of negative 28.2%, while the more mature MI300X platform is even worse at negative 64%. The gap is even more evident in revenue per chip per hour: $7.5/hr for an NVIDIA Blackwell GPU versus $1.7/hr for AMD’s MI355X.
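To make these figures easier to compare, here is a minimal Python sketch. It assumes the conventional definition of profit margin, (revenue - cost) / revenue; the article does not spell out Morgan Stanley’s exact model, so treat this as an illustration rather than the report’s method. The helper names `profit_margin` and `cost_per_revenue_dollar` are hypothetical, and the only data used are the margins and hourly revenue figures quoted above.

```python
def profit_margin(revenue: float, total_cost: float) -> float:
    """Profit margin as a fraction of revenue (assumed standard definition)."""
    if revenue <= 0:
        raise ValueError("revenue must be positive")
    return (revenue - total_cost) / revenue


def cost_per_revenue_dollar(margin: float) -> float:
    """Cost incurred for every $1 of revenue, implied by a given margin."""
    return 1.0 - margin


# Profit margins quoted in the article, expressed as fractions.
MARGINS = {
    "NVIDIA GB200 NVL72": 0.776,
    "Google TPU v6e pod": 0.749,
    "AWS Trn2 UltraServer": 0.625,
    "AMD MI355X": -0.282,
    "AMD MI300X": -0.64,
}

# Revenue per chip per hour quoted in the article (USD).
REVENUE_PER_CHIP_HOUR = {"NVIDIA Blackwell": 7.5, "AMD MI355X": 1.7}

if __name__ == "__main__":
    for platform, margin in MARGINS.items():
        cost = cost_per_revenue_dollar(margin)
        print(f"{platform}: ${cost:.3f} of cost per $1 of revenue")

    ratio = REVENUE_PER_CHIP_HOUR["NVIDIA Blackwell"] / REVENUE_PER_CHIP_HOUR["AMD MI355X"]
    print(f"Blackwell earns about {ratio:.1f}x the hourly revenue per chip of the MI355X")
```

Under this definition, a negative margin means the platform costs more to run than it earns: a margin of negative 28.2% corresponds to about $1.28 of cost for every $1 of revenue.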

Beyond profit margins, NVIDIA also sets the pace on revenue efficiency. NVIDIA’s HGX H200 commands $3.7 per hour, while most of the competition sits between $0.5 and $2.0 per hour. These results reinforce NVIDIA’s position as the clear leader in AI inference performance.

AMD is strong on hardware, specifically the MI300 and MI355X platforms, but it still lacks the software to be competitive. The report puts the total cost of ownership (TCO) of AMD’s MI300X platform at $744 million, nearly equal to the $800 million for NVIDIA’s GB200 platform. These are far from exact figures in the HC3M results, and most of them appear to be based on similarly worded descriptions, which makes a precise direct comparison difficult. Even so, Blackwell’s performance advantage far outweighs its slightly higher cost, which improves the long-term return for enterprises.

Among the newer-generation platforms, AMD’s MI355X servers carry a TCO of $588 million, on par with Huawei’s CloudMatrix 384. Even so, for a comparable investment, NVIDIA’s Blackwell GPUs deliver greater capability thanks to a better software ecosystem and more focused inference optimization. That edge is why NVIDIA continues to dominate AI inference, a segment expected to account for 85% of AI computing in the next few years.

Moving forward, NVIDIA has the Blackwell Ultra GPU on the horizon, which is expected to offer a 50% improvement over the existing Blackwell GB200. Its roadmap also includes Rubin, due in 2026, followed by Rubin Ultra and Feynman. AMD, on the other hand, will aim to match NVIDIA next year with its MI400 series, targeting the AI inference performance gap.

That combination of hardware prowess, software superiority, and the highest AI inference profit margins should keep NVIDIA’s Blackwell GPUs on top for quite some time. By maximizing CUDA optimizations and ensuring FP4 support, NVIDIA is positioning Blackwell as the go-to choice for AI factories around the globe, and AMD faces an uphill climb to catch up.

FAQ

NVIDIA Blackwell GPUs pair FP4 support with CUDA software optimizations to deliver unparalleled AI inference performance and profitability.

The NVIDIA Blackwell GB200 NVL72 platform delivers the AI inference industry’s highest profit margin, at 77.6%.

NVIDIA Blackwell GPUs generate strong profits, making NVIDIA the market leader, while AMD’s MI355X platform posts a negative margin of -28.2%.